I have a growing digital notepad that I’ve been writing in over the past year. It’s titled “Be a great PM”, and it’s a collection of information and encouraging quotes from books, online articles and discussions with others, or just plain thoughts that came to my mind. It serves as a motivational guide and supporting source when I feel I’m not good at my job (I bet I’m the only one in this world to feel that way :)), when I’m stuck, or when I need to know how to move forward in or away from a situation.

After revisiting it once again last week, I wondered if this is something I should share with the world, filling it out with some more words so others might be able to benefit from it. If you end up reading this post and find yourself going “Duh, I already know that”, I think that’s ok, as I believe it might help remind you and others that you are not alone with some of the daily challenges you face as a PM.

Additionally (and still surprising to me), I’ve received about half a dozen personal messages from students via LinkedIn or Twitter over the last year asking me if I could chat with them about Product Management. Still feeling extremely honored, I’ve tried to set up calls with most of them, but of course, that’s time-consuming and we are all busy, right? Most of the students who approached me were female CS students eager to learn about product management. It reminded me of how I felt, coming from a mostly male-dominated engineering world, trying to score my first PM job, and how useful it was to get real-life stories and advice from people around me. I felt happy that it was now my turn to give back.

A little disclaimer before the actual post begins: just to be clear, this blog post won’t mention “user stories”, “backlog”, “roadmap”, “CEO of the product” or any “frameworks” to follow. This post is about some of the interpersonal skills a product manager should possess and ideally practice in their daily role as a PM.

Making Decisions

Even if your team members don’t tell you that directly, they want you to make decisions, even ones they don’t agree with. The book “Making it Right: Product Management for a Startup World” backed up my belief that not everybody has to agree with every decision you make (as a PM). So while I strive to foster a collaborative environment with the team, I’ve come to understand that this means everyone gets a voice, but not everyone can or should get to decide.

I always encourage everyone on the team to share their opinions and invite them to discuss their concerns and hopefully provide useful compromises if they don’t agree with something. It’s crucial that every team member I work with knows this; I will always listen and try to understand your point of view!

Don’t get me wrong: quite frequently, I’m not fully certain myself about a decision I’ve made, but I normally have a gut feeling, and if you can calibrate this feeling with some (even very few) hard facts, it goes a long way.

I need to clarify, though: I rarely get the opportunity to make a decision with perfect information. As a PM, you’ll need to learn to be ok with making decisions based on information that is sometimes incomplete.

Seth Godin said, “Nothing is what happens when everyone has to agree.” The product manager is there to make sure things happen and to make the right decisions, which most of the time are not necessarily the most popular ones.

I love working with my engineering manager. He keeps repeating what was passed on to him by his manager, who probably picked up the original quote from Jeff Bezos but adjusted it to: “Disagree but commit positively.” Brilliant!

I ❤ this quote so much, and I hope everybody can follow this simple yet powerful approach in any team interaction (and in life). It also doesn’t hurt to remind people of this quote in the midst of a discussion.

Suppose you’ve made the wrong decision and it resulted in some sort of failure. Figure out (hopefully beforehand) how failure is treated inside your company and, most importantly, by your manager and upper management. It’s great if you get the feeling that the company you work for accepts failure as part of trying new things. Do you feel comfortable and supported when proposing a new feature or an entire product, knowing that management trusts your judgment and your commitment to try your best to succeed, and that if it doesn’t work out in the end, they won’t judge you and are ok with it? Build trust and set clear expectations with your manager so they feel comfortable supporting you in your work, knowing you will try your hardest to succeed. (Thanks, Jeff + Nick :))

Respect & Team Dynamics

As I go through life, my daily goal is to be more mindful of the people and situations around me, and to practice being reflective and introspective. Respecting the people around me is as important as feeling respected. Besides respecting individuals, it’s extremely important to also respect their role and what it entails, and most importantly, to understand their professional motivation.

So often in my professional career I’ve heard co-workers complaining about specific departments, saying that a particular team doesn’t know what they are doing, or asking why they are doing xyz. To be honest, we should all at least try to put ourselves in their shoes and reflect for a moment: is there a good reason this person might be proposing xyz? Just breathe for a second, think about it and give the other side a chance to explain themselves. Ask them: Why do you want to do xyz? How does it help you succeed in your role, and make us better as a team? What is your motivation? I hope it goes without saying that you should be careful not to make them feel they need to justify their role, or to come across as if you question their good intentions. Don’t give them a hard time.

And of course, share your own motivations as they relate to your role as well.

I know, you can’t choose who you get to work with, and I think it’s totally normal to encounter people in your career that you work well with and others that you don’t. Try as much as you can to reflect on why this particular person has the power to push your buttons, and figure out what these buttons actually are and mean. Be honest with yourself, listen carefully to your inner voice, and see if you can find out whether you are intimidated, or just jealous because they do something that you might be afraid of. I’m not certain where I first picked this up: you should try to focus on at least one good thing this person does, or can do, and keep that in mind when interacting with them. I’ve tried it, it works 🙂

I’ve noticed I feel very thankful when I get the chance to work with solution-oriented people. The idea is that it’s ok to poke holes, disagree or block something, as long as you provide alternatives or actionable feedback, similar to “disagree but commit positively.”

Be the Glue

In order to be the glue, you’ll need to find out what sticks with the team; sometimes each team member needs different support. Overall though, I try to stick to the attitude that I will fill any gap where needed. In practical terms, that means getting snacks for the team, or writing a patch :); do anything you can to help the team succeed. If they need you to unblock something that you thought is not something you *should* be doing, leave your ego at the door and just do it!

Over-communicating and Sharing (beyond your team)

Repeating the “why are we doing this”, a plan, a goal, or the strategy goes a long way when working with cross-functional teams. Don’t worry about sounding like a broken record (seriously, try to let this go!). I still chuckle a bit inside when I begin meetings by saying “The purpose of this meeting is…”, or “We are doing this because we believe we can move the needle on (e.g.) retention”. In addition to saying it out loud, put it in a shared document, email it around, make it your channel topic in Slack 🙂 Repeat.

Practicing the “No”

I have to admit, I’m a people pleaser (well, who likes conflict?), and that makes it even harder to practice one of the most important skills a PM needs to exercise on a regular basis: saying No. It’s still not easy for me, but I’ve learned to do it more often and it has gotten easier. And if it’s difficult for you, try saying “Not now”. This has worked for me in difficult situations.

Practice Fairness, Listen with Empathy and Compassion

In the book Making it Right: Product Management for a Startup World, the author breaks down what empathy means, and adds another word to it: Fair!

fair. adjective. free from favoritism or self-interest or bias or deception.

Concentrate on these words, and check yourself against them when talking to your team members and, of course, when interacting with your users. It can be difficult, as a PM, to take your own emotions and feelings out of the equation when making decisions, especially if you yourself really believe you are the “typical” user.

When dealing with product decisions and what we think users would want, I try as best I can to avoid using phrases like “I think…” or “I would…”.

Another great piece of advice, stemming from Stephen R. Covey’s observation, is to try to listen to understand and not to respond, especially if it’s criticism. You need to be able to take that criticism constructively and not personally, and turn it around to make a better product. I remember several years back during my first product kick, it took me a while to not take trolls or user comments personally. Behind each angry user or troll there are normally solid reasons for their behavior. Sadly, they might just want attention: they want somebody who listens to them, and they want to be understood. Or they might be a user who really wants to use your product but is frustrated because it’s not solving their problem (that’s an interesting clue for you to try to understand if this might also be a problem for other, less vocal users).

This list is by no means complete. However, if you have a bad day and feel you are not doing a good job, do what I do: review these points. I hope they’ll provide you with some comfort and reassurance that you are not alone.

I’m sure you are a good PM 🙂

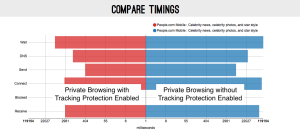

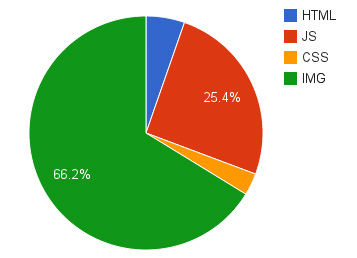

Bandwidth usage of various content types

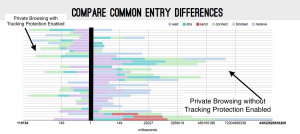

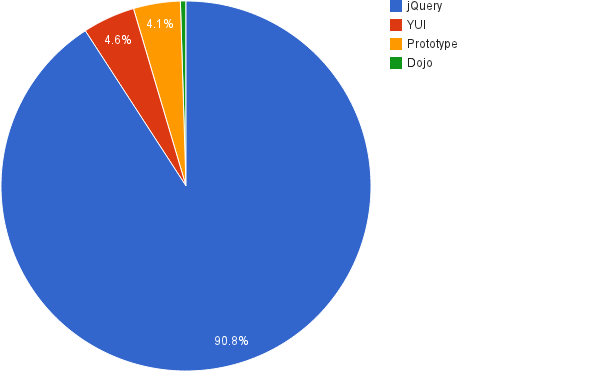

Bandwidth usage of various content types Most popular libraries

Most popular libraries