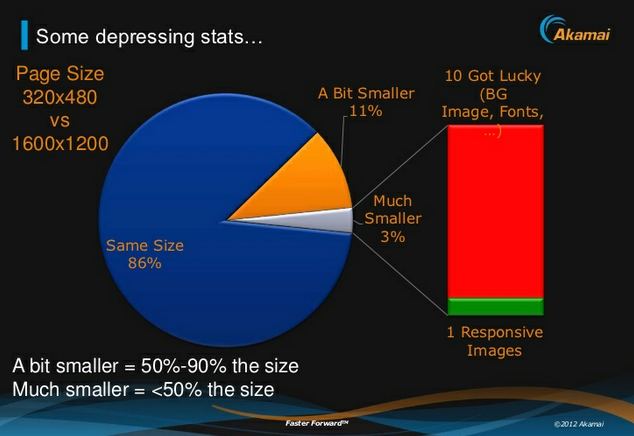

I read “mobile is not different” and I childishly take it personally. Why? I dig deeper, as if I were my own therapist: why does it affect me the way it does? It feels like hopeful idealism and a need to generalize complicated things. Not a bad idea, and if only I could take that 2MB desktop site, serve it to all mobile users and trust they would be happy, my job would be done! It’s not that easy. When developing websites, you need to overcome many more obstacles for mobile than for desktop sites, which are served over a high-speed connection to a crazy powerful CPU device, a.k.a. a desktop computer, able to present any kind of content (text, image, audio or video). I wish I didn’t have to worry so much about performance, formats or codecs. I wish I didn’t have to think about latency and bandwidth issues, packet loss, data usage or energy consumption when delivering pages to small battery-driven devices, a.k.a. smartphones. I wish the performance budget we set for page size and load times were the same for desktop and mobile, well, on “one web”.

I am a big fan of consolidating, so if we want to consolidate “everything web” into “one web”, let’s consolidate the “proper” way: make the “desktop” web, or call it the “one” web, follow the same strict rules that mobile or any other platform has to follow. Let’s clean up this mess (it’s my only wish), and let’s do it properly this time, please!

What does “one web” mean to us? Deliver content to all devices, make it accessible and working for all platforms – Check, yes!

In order to get there, can we just pick one platform, develop for it and assume all the other ones will just magically start working? – Check? Unfortunately no.

Technically and realistically, I have trouble wrapping my head around claims like “there is only one web”, “mobile is not that different” or “there is no mobile web”. Mobile is different, though, unless I am misunderstanding what “one” means.

I think we all perceive the notion of “one web” differently, depending on what issues we want to solve and what job title we hold at the time. For example, a performance advocate would say: “One web! I wish for one common web to develop for, with no screen real estate, load time or page size issues when developing for mobile devices! Yay!” But that’s not the case. You have way more flexibility and allowance in a desktop browser on a high-speed home internet connection. Let’s continue: if you ask the sponsor of the site or a content strategist, they’d say “yeah, one web, I want e v e r y t h i n g and I want it e v e r y w h e r e”. Is that what people mean by “one web”?

I’m not sure we can generalize that much. Think about it: how often do we use a 3G connection on our desktop browser? Or take data plans for cell phones, which (in Canada at least) cost a lot: going over the usage allowance by, e.g., 100MB costs you way more on your mobile data plan than going 100MB over on your high-speed home internet package. Also, we can’t (yet) make use of touch gestures on a desktop computer the way they come in handy on a mobile device, e.g. when swiping through photos.

Also, what about context-aware strategies and sites? While your big iMac might want to show you all 35,000 clothing items served as high-resolution photos, as a data plan payer I would appreciate it if the smart developers did not send those to my mobile device, because all I want to do at the bus stop is see where the nearest store is and what its opening hours are.
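To make “context aware” a bit more concrete, here is a rough sketch of how a site could decide which image variant to send. Everything in it is an assumption for illustration: the names `ConnectionHints`, `ImageVariant` and `pickImageVariant` are made up, and so are the rules. In a browser, similar hints may be available through the Network Information API (`navigator.connection`), which not every browser supports.

```typescript
// Hypothetical hints about the user's situation. Where the Network Information
// API is supported, saveData and effectiveType could be read from
// navigator.connection; here they are plain inputs so the sketch runs anywhere.
interface ConnectionHints {
  saveData: boolean; // the user asked for reduced data usage
  effectiveType: "slow-2g" | "2g" | "3g" | "4g"; // rough connection class
}

type ImageVariant = "none" | "thumbnail" | "high-res";

// Illustrative rules only: respect the data plan first, then the connection.
function pickImageVariant(hints: ConnectionHints): ImageVariant {
  if (hints.saveData) {
    return "none"; // store locator and opening hours still work without photos
  }
  if (hints.effectiveType === "4g") {
    return "high-res";
  }
  return "thumbnail";
}

// Someone at the bus stop on a 3G connection.
console.log(pickImageVariant({ saveData: false, effectiveType: "3g" }));
```

The point is not these particular thresholds. The decision is made per request from what the device tells you, not from a guess about what “mobile users” want.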

One web?

Stephanie Rieger has talked about the trouble with context before. And how does “Mobile First” fit in here? Does that mean that Luke Wroblewski wrote a whole book for nothing, if mobile is not different or is no longer a platform to value?

It’s about adaptation, no?

Let the experts comment. The W3C Mobile Web Best Practices describe “one web” as follows:

> One Web means making, as far as is reasonable, the same information and services available to users irrespective of the device they are using. However, it does not mean that exactly the same information is available in exactly the same representation across all devices. The context of mobile use, device capability variations, bandwidth issues and mobile network capabilities all affect the representation.

Hands down – I totally agree with that.

I would love to not treat an iWatch (?), an iPhone or a SmartTV as “different” when it comes to designing and developing web experiences for them, but unfortunately they come with different challenges when delivering the same web content. When developing for touch devices you might want to use gestures, but maybe you don’t want to load those gestures for your non-touch SmartTV. There, your UX might break if your website design and screen real estate rely on gestures. Your iMac might not need to know where you physically are right now, unless you’re taking it with you (it’s so pretty, I know) while passing a Starbucks store that has free “grande iced half caf triple mocha latte macchiato” today. Do you think you would want to know about this offer while sitting at home at night with a glass of red wine?
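The gesture case could be sketched like this. It is a minimal illustration, not a recipe: `InputCapabilities`, `Feature` and `featuresFor` are hypothetical names, and the feature list is invented. In a browser, the touch-point count could be read from `navigator.maxTouchPoints`.

```typescript
// Hypothetical description of what a device can do. In a browser,
// maxTouchPoints could be filled from navigator.maxTouchPoints.
interface InputCapabilities {
  maxTouchPoints: number;
}

type Feature = "button-navigation" | "swipe-gestures";

// Decide which interaction features to load for a device.
function featuresFor(caps: InputCapabilities): Feature[] {
  // Baseline that works everywhere, so the layout never depends on gestures.
  const features: Feature[] = ["button-navigation"];
  if (caps.maxTouchPoints > 0) {
    // Only touch-capable devices get the extra gesture code.
    features.push("swipe-gestures");
  }
  return features;
}

const phone = featuresFor({ maxTouchPoints: 5 });
const smartTv = featuresFor({ maxTouchPoints: 0 });
console.log(phone.join(", "));
console.log(smartTv.join(", "));
```

Gestures are treated as an addition on top of something that already works. The non-touch SmartTV skips the extra code without the UX falling apart.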

May I take a stab at this and rephrase it a bit? There is the web, and it is accessible from and part of more and more devices (toaster, I’m looking at you, yes I am waitin’ ….), all different in their setups, their browsers, their connection limitations, their size and their CPU. Of course you need to adapt and adjust the web to each and every platform, because they are different even though they sometimes share characteristics. The user experience of viewing a website on a 320px wide screen is different from viewing it on a 50-inch screen. I believe you still have to meet different requirements, at least from a design perspective.

Or even see it the other way around. Value the differences and benefit from them. In some ways mobile devices are smarter than desktop devices: they know where you are, and they understand touch. Use their advantages to your own advantage. Why wouldn’t you?

I am not saying that anybody I’ve mentioned or cited in this post is wrong. We all have different perspectives and problems to solve. On that note, I’d appreciate constructive criticism, because in my opinion it only shows that we actually end up caring and worrying about the same issue, don’t you think? It’s a good thing.

In an ideal world, the web just works everywhere and serves everything but I fear we are not there yet. We sure can work towards that by creating solid back-end systems with solid and structured content and media sources that each and every platform can pull from whenever and however they want.

It’s not that “mobile is not different”, it is that “platforms are different” or “mobile is just another platform”.

Sources:

- http://venturebeat.com/2013/03/27/heroku-mobile-lead-mobile-is-not-different/

- http://www.w3.org/TR/mobile-bp/#OneWeb

- http://www.the-haystack.com/2011/01/07/there-is-no-mobile-web/

- http://www.forbes.com/sites/anthonykosner/2012/05/03/seven-deadly-mobile-myths-josh-clark-debunks-the-desktop-paradigm-and-more/

- http://www.lukew.com/ff/entry.asp?933

- http://www.lukew.com/ff/entry.asp?1393

- http://www.slideshare.net/yiibu/the-trouble-with-context